Most organizations don’t have a technology problem.

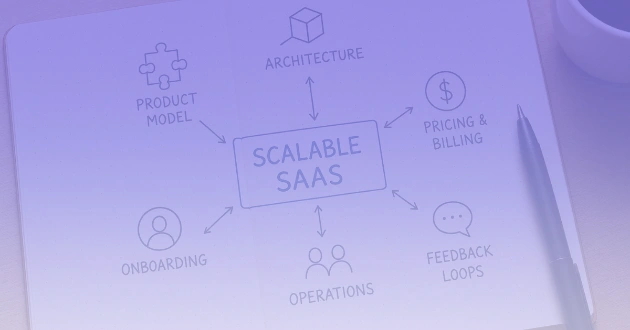

They have a visibility problem. Over time, tools accumulate. Teams adopt new platforms, legacy systems linger, and integrations multiply. What begins as a set of deliberate choices gradually turns into a patchwork of overlapping capabilities, hidden costs, and unclear ownership.

A structured tech stack audit is not just about cutting tools. It is about regaining control. Done well, it reveals how technology actually supports the business and where it quietly undermines it.

The challenge is doing this without disrupting operations or turning it into a months-long exercise. A focused 30-day audit is both realistic and sufficient if approached with discipline.

Why Tech Stack Audits Matter Now

Several forces have made periodic audits essential rather than optional.

First, SaaS has dramatically lowered the barrier to tool adoption, and tools have multiplied as a result. Teams can subscribe without central oversight, which leads to duplication and fragmentation.

Second, cost structures have shifted. Subscription models create ongoing financial commitments that are often underestimated. What looks inexpensive at the team level becomes significant at scale.

Third, integration complexity has increased. APIs and middleware promise flexibility, but they also introduce dependencies that are rarely documented or reviewed.

Common mistakes during audits often stem from narrow framing. Many organizations treat the process purely as a cost-cutting exercise, or focus only on listing tools without understanding workflows. Shadow IT is frequently ignored, and the human cost of switching tools is underestimated.

A meaningful audit connects technology to outcomes, not just expenses.

1. Start with Business Capabilities, Not Tools

The most common failure in tech stack audits is starting with a list of applications.

Instead, begin with business capabilities. What does the organization need to do well? This could include areas such as lead generation, product development, customer support, or internal knowledge sharing.

Once these capabilities are defined, map the tools that support each one. This quickly shows where multiple tools support the same function, where a capability is fragmented across teams, and where tools exist without a clear business purpose.
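A capability map is essentially an inversion of the tool inventory. Instead of listing what each tool does, you list which tools serve each capability. The minimal Python sketch below assumes a hypothetical inventory (all tool and capability names are placeholders). It flags capabilities served by more than one tool and tools mapped to no capability at all.

```python
from collections import defaultdict

# Hypothetical inventory: each tool maps to the business capabilities it supports.
inventory = {
    "AnalyticsA": ["reporting"],
    "AnalyticsB": ["reporting"],
    "CRM": ["lead generation", "customer support"],
    "HelpdeskX": ["customer support"],
    "WikiTool": [],
}


def map_capabilities(inventory):
    """Invert tool -> capabilities into capability -> tools and flag problems."""
    by_capability = defaultdict(list)
    for tool, capabilities in inventory.items():
        for capability in capabilities:
            by_capability[capability].append(tool)

    # Capabilities supported by more than one tool: candidates for overlap review.
    overlaps = {cap: sorted(tools) for cap, tools in by_capability.items() if len(tools) > 1}
    # Tools not mapped to any capability: no clear business purpose on record.
    orphaned = sorted(tool for tool, caps in inventory.items() if not caps)
    return overlaps, orphaned


overlaps, orphaned = map_capabilities(inventory)
print("Served by several tools:", overlaps)
print("No mapped capability:", orphaned)
```

In practice, the inventory would come from procurement records, SSO logs, and stakeholder interviews rather than a hand-written dictionary.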

For example, many organizations discover they are using several analytics platforms across different teams. Each tool may be valuable in isolation, but together they create inconsistent metrics and competing interpretations of performance.

A capability-first view shifts the conversation from tools to outcomes. It reframes decisions around effectiveness rather than familiarity or habit.

2. Expose Hidden Costs Beyond Subscriptions

Subscription fees are the most visible cost, but rarely the most important.

A proper audit examines the full cost of ownership. This includes the time engineers spend maintaining integrations, the effort required to onboard new employees, and the inefficiencies created by fragmented workflows.

In many cases, the real cost of a tool is embedded in how it is used. A set of loosely connected systems may appear affordable, but if teams are duplicating data entry or manually reconciling reports, the operational cost becomes significant.

A useful shift is to evaluate cost at the level of workflows rather than individual tools. When viewed this way, consolidation often becomes more attractive, not because of license savings, but because it simplifies execution and reduces friction across teams.
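To make workflow-level costing concrete, the sketch below adds license fees to the labor a workflow consumes: integration maintenance, onboarding time per new hire, and manual work such as duplicate data entry. Every figure is an illustrative simplification rather than a benchmark. This includes the flat hourly rate and the assumption that each new hire learns every tool in the workflow.

```python
from dataclasses import dataclass


@dataclass
class ToolCost:
    name: str
    annual_subscription: float  # license fees per year
    maintenance_hours: float    # yearly hours spent maintaining its integrations
    onboarding_hours: float     # hours each new hire spends learning the tool


@dataclass
class Workflow:
    name: str
    tools: list[ToolCost]
    manual_hours: float         # yearly hours of duplicate entry or reconciliation


def workflow_cost(workflow, hourly_rate, new_hires_per_year):
    """Annual cost of a workflow: licenses plus the labor its tools demand.

    Simplification: assumes every new hire learns every tool in the workflow.
    """
    total = 0.0
    for tool in workflow.tools:
        labor_hours = tool.maintenance_hours + tool.onboarding_hours * new_hires_per_year
        total += tool.annual_subscription + labor_hours * hourly_rate
    return total + workflow.manual_hours * hourly_rate


# Hypothetical figures for the same reporting workflow, before and after consolidation.
fragmented = Workflow(
    "reporting",
    [ToolCost("SpreadsheetSync", 1200, 40, 2), ToolCost("DashboardLite", 1800, 60, 3)],
    manual_hours=200,
)
consolidated = Workflow("reporting", [ToolCost("UnifiedBI", 6000, 20, 4)], manual_hours=20)

for wf in (fragmented, consolidated):
    licenses = sum(t.annual_subscription for t in wf.tools)
    print(licenses, workflow_cost(wf, hourly_rate=75, new_hires_per_year=10))
```

In this hypothetical case, the fragmented setup has the cheaper licenses (3,000 versus 6,000 per year). Once labor is counted, however, it costs 29,250 per year, against 12,000 for the consolidated setup.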

3. Identify Redundancy and Fragmentation Patterns

Redundancy rarely appears as exact duplication. More often, it emerges as partial overlap between tools that were adopted at different times for slightly different needs.

This creates fragmentation. Teams may rely on different project management systems, communication platforms, or data repositories, each optimized locally but misaligned globally.

The risk is not just inefficiency. Fragmentation slows decision-making, complicates onboarding, and reduces confidence in data.

However, not all redundancy is wasteful. Some overlap is justified, particularly when teams have distinct workflows or regulatory constraints. The goal is not to eliminate variation, but to ensure it is intentional.

A practical approach is to distinguish between necessary overlap and accidental complexity. Only the latter should be actively reduced.
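Partial overlap can be surfaced with a simple similarity score between the feature sets of different tools. This sketch uses Jaccard similarity (shared features divided by all features) with an arbitrary 0.5 threshold. It skips pairs that the audit team has already documented as intentional. The feature lists and the justified pair are hypothetical.

```python
from itertools import combinations

# Hypothetical feature sets collected during the inventory.
features = {
    "TrackerA": {"tasks", "kanban", "time tracking", "comments"},
    "TrackerB": {"tasks", "kanban", "comments", "gantt"},
    "ChatApp": {"messaging", "comments"},
    "ArchiveEU": {"storage", "retention", "search"},
    "ArchiveUS": {"storage", "retention", "search"},
}

# Overlaps already reviewed and accepted as intentional
# (here, a hypothetical data-residency requirement).
justified = {frozenset({"ArchiveEU", "ArchiveUS"})}


def jaccard(a, b):
    """Shared features divided by all features; 0.0 if both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def accidental_overlaps(features, justified, threshold=0.5):
    """Return tool pairs with high feature overlap that nobody has justified."""
    flagged = []
    for (t1, f1), (t2, f2) in combinations(sorted(features.items()), 2):
        score = jaccard(f1, f2)
        if score >= threshold and frozenset({t1, t2}) not in justified:
            flagged.append((t1, t2, round(score, 2)))
    return flagged


print(accidental_overlaps(features, justified))
```

Here, the two project trackers are flagged at 0.6. The identical archives are skipped because their overlap is documented, which keeps the focus on accidental complexity.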

4. Evaluate Integration Health and Data Flow

A modern tech stack is defined less by individual tools and more by how they interact.

Integrations are often implemented quickly to solve immediate problems, with little attention to long-term resilience. Over time, this creates fragile systems that depend on undocumented connections.

During an audit, it is important to map how data flows across the organization. Where are the critical handoffs? Which processes depend on automated integrations, and which rely on manual workarounds?

This exercise frequently reveals several recurring issues:

- Single points of failure that are not actively monitored

- Redundant or unused integrations that add complexity

- Manual workarounds that indicate deeper structural problems
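Once data flows are mapped, the recurring issues listed above can be screened for mechanically. The sketch below treats each integration as an edge between two tools and applies a rough heuristic rather than a formal graph analysis. A tool touching many integrations counts as a hub, and a hub with an unmonitored automated connection is flagged as a possible single point of failure. The `Integration` fields are assumptions about what an inventory might record, including `runs_last_90d` as a usage signal.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Integration:
    source: str
    target: str
    kind: str           # "automated" or "manual"
    monitored: bool     # is failure alerting in place?
    runs_last_90d: int  # hypothetical usage signal from logs


def review_integrations(integrations, hub_threshold=3):
    # Count how many integrations touch each tool.
    degree = Counter()
    for i in integrations:
        degree[i.source] += 1
        degree[i.target] += 1
    hubs = {tool for tool, d in degree.items() if d >= hub_threshold}

    # Hubs with at least one unmonitored automated connection.
    unmonitored_hubs = sorted({
        tool
        for i in integrations
        if i.kind == "automated" and not i.monitored
        for tool in (i.source, i.target)
        if tool in hubs
    })
    # Automated integrations that have not run recently.
    unused = [(i.source, i.target) for i in integrations
              if i.kind == "automated" and i.runs_last_90d == 0]
    # Manual handoffs: candidates for deeper structural review.
    manual = [(i.source, i.target) for i in integrations if i.kind == "manual"]
    return unmonitored_hubs, unused, manual


integrations = [
    Integration("CRM", "Warehouse", "automated", monitored=False, runs_last_90d=2160),
    Integration("Billing", "Warehouse", "automated", monitored=True, runs_last_90d=90),
    Integration("Warehouse", "Dashboard", "automated", monitored=False, runs_last_90d=540),
    Integration("CRM", "MarketingTool", "automated", monitored=True, runs_last_90d=0),
    Integration("Billing", "FinanceSheet", "manual", monitored=False, runs_last_90d=0),
]
print(review_integrations(integrations))
```

A stricter analysis could compute articulation points in the integration graph. The degree count is used here only because it is easy to explain to non-engineering stakeholders.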

Improving integration health often delivers more value than introducing new tools. It stabilizes operations and increases trust in the underlying data.

5. Align Ownership and Governance

Technology decisions without clear ownership tend to degrade over time.

Many organizations lack formal governance for their tech stack. Tools are adopted informally, ownership is unclear, and there is no consistent process for reviewing or retiring systems.

This leads to gradual sprawl. Costs increase, usage declines, and no one is accountable for outcomes.

Establishing governance does not require heavy processes. It requires clarity. Each tool should have a defined owner, a clear purpose, and a basic review cadence.
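The three requirements above (an owner, a purpose, and a review cadence) are simple enough to check automatically against a tool registry. This is a minimal sketch; the `ToolRecord` fields and the sample entries are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class ToolRecord:
    name: str
    owner: Optional[str]    # accountable person or team
    purpose: Optional[str]  # the business capability it serves
    review_every_days: int  # agreed review cadence
    last_reviewed: date


def governance_gaps(registry, today):
    """List (tool, issue) pairs for missing ownership, purpose, or overdue reviews."""
    issues = []
    for t in registry:
        if not t.owner:
            issues.append((t.name, "no owner"))
        if not t.purpose:
            issues.append((t.name, "no stated purpose"))
        if today - t.last_reviewed > timedelta(days=t.review_every_days):
            issues.append((t.name, "review overdue"))
    return issues


registry = [
    ToolRecord("CRM", "Sales Ops", "Manage leads and pipeline", 180, date(2024, 3, 1)),
    ToolRecord("DashboardLite", None, "Team dashboards", 180, date(2023, 9, 1)),
    ToolRecord("WikiTool", "IT", None, 365, date(2024, 1, 15)),
]
for issue in governance_gaps(registry, today=date(2024, 6, 1)):
    print(issue)
```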

When ownership is explicit, decisions become easier. Tools are evaluated based on performance, not inertia.

A Practical 30-Day Audit Approach

A structured timeline helps maintain focus and prevents the audit from becoming an open-ended exercise.

In the first week, the goal is discovery. This involves building a comprehensive inventory of tools, identifying stakeholders, and mapping tools to business capabilities.

The second week focuses on analysis. At this stage, usage patterns, costs, and overlaps are examined. Integration points and data flows are mapped to understand how systems interact.

During the third week, the emphasis shifts to evaluation. Tools are assessed against business needs, and areas of inefficiency or risk are identified. This is where priorities begin to emerge.

The final week is dedicated to action planning. Decisions are made about which tools to retain, consolidate, or retire. Governance mechanisms are defined to ensure the stack remains aligned over time.
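For the week-four discussion, a simple triage rule can turn the week-two findings into a first-pass recommendation for each tool. The sketch below is a hypothetical heuristic, not a prescribed method. It suggests retiring tools below an arbitrary 30% seat utilization, consolidating tools with known overlaps, and retaining the rest.

```python
from dataclasses import dataclass, field


@dataclass
class ToolUsage:
    name: str
    active_users: int
    licensed_seats: int
    # Overlapping tools identified during the week-two analysis.
    overlaps_with: list[str] = field(default_factory=list)


def recommend(tool, min_utilization=0.3):
    """First-pass triage suggestion; the 0.3 threshold is arbitrary."""
    # Tools without seat data skip the utilization check.
    if tool.licensed_seats and tool.active_users / tool.licensed_seats < min_utilization:
        return "retire"
    if tool.overlaps_with:
        return "consolidate"
    return "retain"


tools = [
    ToolUsage("CRM", active_users=180, licensed_seats=200),
    ToolUsage("DashboardLite", active_users=12, licensed_seats=100),
    ToolUsage("TrackerB", active_users=40, licensed_seats=60, overlaps_with=["TrackerA"]),
]
for t in tools:
    print(t.name, recommend(t))
```

A low-usage tool may still be critical, as with a tool used once a year for compliance reporting. For that reason, the output should feed stakeholder discussion rather than replace it.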

The value of this approach lies in its momentum. Each phase builds toward decisions, not just insights.

What Actually Matters in a Tech Stack Audit

A successful audit is not defined by how many tools are removed. It is defined by how clearly the tech stack supports the way the organization operates.

The most important outcomes are improved alignment, reduced friction, and better visibility into how work gets done. Cost savings may follow, but they are a byproduct rather than the primary objective.

In practice, the most effective audits lead to simpler workflows, clearer ownership, and more reliable data. These are the factors that ultimately improve execution.

Rethinking Your Stack Going Forward

Technology stacks rarely fail all at once. They drift.

Each new tool, integration, or workaround makes sense in isolation. Over time, however, the system becomes harder to understand and manage.

A 30-day audit is not just a cleanup exercise. It is a reset point.

It creates an opportunity to realign technology with how the organization actually operates today, not how it operated when the stack was first assembled.

The more important question is not how many tools you have, but whether they still reflect how your business creates value now.